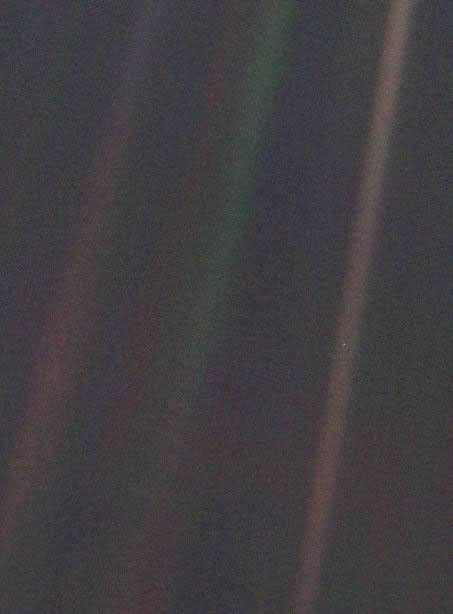

"Look again at that dot. That's here. That's home. That's us." — Carl Sagan, 1994

About

Hello, I’m Shivam. Online I go by phyBrackets.

I’m a software engineer (sometimes?). At KDAB I work on customer projects built with modern C++ and Qt, the kind of software where things actually need to work properly and run fast. I’ve also worked on Clazy, a static analyzer that finds Qt-specific bugs at compile time before they become your problem later, and on a physics engine.

In my school days I stayed away from computers completely. Other things felt way more interesting, or just more “doable”, to me: physics, biology, chemistry, the kind of questions that are almost too big (no, just quite cool) to even ask. Programming came late for me, and what kept me in it wasn’t the code itself. It was realising that it’s basically the same curiosity, just showing up in a different form. I like programming now, especially where it can serve a larger purpose.

I also like to read literature; it’s one of the few things that makes the world feel less relentless. I collect books too, which is either a hobby or a problem depending on how you look at my collection. There’s something about owning a book that’s different from reading it; half of them I haven’t gotten to yet, and I’m fine with that. They’re there when I need them. Right now I’m reading The City and Its Uncertain Walls by Murakami, a world that exists somewhere between dream and memory, where the boundary between who you are and where you are starts to blur.

LLVM and debug information

Outside of work, I contribute to the LLVM compiler infrastructure, and the thing I’m focused on is how compiler optimizations quietly break debug information.

So here’s what happens. When you compile your code with -O2 or -O3, Clang does a lot of work under the hood: inlining functions, moving instructions around, pulling variables out of memory into registers, throwing away computations it knows are pointless. The final machine code often looks nothing like what you wrote, and that’s fine; that’s the whole idea. But when you also build with -g, there’s a format called DWARF that gets emitted alongside the machine code, and its job is to act like a map between that transformed code and your original source. So when you fire up GDB or LLDB, it can still show you where you are, what your variables hold, what called what.

The problem is that a lot of the optimization passes in LLVM’s pipeline just don’t bother keeping that map updated when they change things. A pass inlines a function, or reassociates some arithmetic, or promotes a local variable into a constant, and it just drops the little #dbg_value markers that were tracking your variables through those changes. The DWARF that ends up in the binary is then missing things or just wrong. So your debugger shows <optimized out>, or jumps to the wrong line, or shows a value that stopped being true several optimizations ago.
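To make that concrete, here is a minimal sketch of the kind of code where this tends to show up. The function names `scale` and `compute` are hypothetical, made up for illustration and not taken from LLVM or its test suite. What the debugger actually shows will depend on the compiler version and flags, so treat the comments as what is likely to happen, not a guarantee.

```cpp
#include <cassert>

// Hypothetical example. At -O0 every local below lives in a stack slot
// and the debugger can print all of them.
static int scale(int x) {
    int factor = 3;           // likely folded into the multiply at -O2
    int scaled = x * factor;  // likely kept only in a register, if at all
    return scaled + 1;
}

int compute(int n) {
    int total = 0;
    for (int i = 0; i < n; ++i) {
        total += scale(i);    // scale() is a likely candidate for inlining
    }
    return total;
}

// Small self-check so the sketch can be verified independently of a debugger.
void check_compute() {
    // scale(0..3) = 1, 4, 7, 10, which sums to 22.
    assert(compute(4) == 22);
}
```

Building this with something like `clang++ -O2 -g`, then breaking inside the loop and asking for `factor` or `scaled`, is one way to see whether the variable locations survived the optimizer or come back as <optimized out>.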

What I find interesting is that it’s not one clean problem you can fix in one place. It’s a lot of small failures spread across many passes, each one a bit different. I’m learning how optimization affects debug information at each level of the pipeline. There’s more going on here than it looks from the outside, with several RFCs active in this space and a lot of ongoing work across the community. The more I dig into it, the more there is to find.

RISC-V

I’m also interested in RISC-V, an open and free instruction set architecture. Most ISAs you run into are really old and carrying a lot of history with them. x86 has been accumulating instructions and weird special cases since the late 1970s. RISC-V starts from scratch: a small, clean base integer ISA (RV32I) with about 40 instructions (47 in older versions of the spec) that you can read through and actually understand.

What makes it really interesting is that it’s open. Anyone can build chips with it without paying anyone for the privilege. LLVM has nice RISC-V backend support too, so it connects naturally to the compiler work I’m interested in.

Consciousness and the big questions

Outside of all that, I think about neuroscience and consciousness a lot, specifically the hard problem of why there’s something it feels like to be a brain doing computations, when most physical processes just don’t feel like anything. I’ve gone deep on quantum mechanics and a bunch of other rabbit holes over the years (like building a radio telescope). Some stuck. Most of them at least changed how I think about the next thing I picked up.

I find it genuinely hard to stay uninterested in something once I understand even a little bit of it.

This is a digital garden — notes in different stages, some rough ideas, some more worked out.